The question of whether artificial intelligence (AI) can think like a human is multifaceted and touches on philosophy, cognitive science, and computer science. Here’s a breakdown:

Definition of Thinking:

What does it mean to “think”? If by thinking we mean the ability to process information, make calculations, and produce outputs based on inputs, then AI can think. But if we mean introspection, consciousness, and emotional reflection, then current AI does not think like a human.

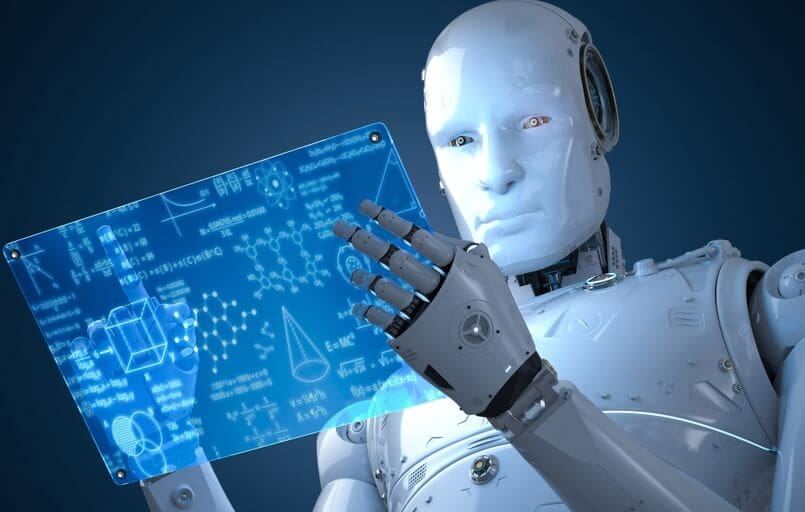

Task-specific Expertise:

Modern AI, particularly those built on neural network architectures, excel at specific tasks when given vast amounts of data. For instance, they can recognize images, transcribe speech, or play board games at superhuman levels. However, their “thinking” is limited to the patterns they’ve been trained on.

Generalization:

Humans excel at generalizing knowledge from one domain to another, often making intuitive leaps. While certain AI models can generalize across tasks they’ve been trained on, they don’t generalize in the broad, flexible manner humans do.

Consciousness and Sentience:

AI lacks consciousness, self-awareness, and sentience. While we can design an AI to say “I am aware,” this is just a programmed response, not an indication of genuine self-awareness or inner experience.

Emotion and Intuition:

Humans often base decisions on emotions, intuitions, or subconscious processes, which are intertwined with our biology and experiences. AI doesn’t have emotions, and while it can be programmed to emulate emotional responses or simulate intuition (by making decisions based on patterns without explicit instructions), it doesn’t “feel” or “intuit” in the human sense.

Learning Process:

A child learns about the world through a combination of innate mechanisms and interactions with their environment, gradually developing reasoning skills, common sense, and a vast array of background knowledge. Current AI, on the other hand, often requires large datasets and explicit training to learn specific tasks. Some AI research, like developmental robotics, aims to replicate more human-like learning processes, but it’s still in early stages.

Creativity:

While AI can generate novel content, such as art, music, or even stories, its “creativity” is based on patterns in the data it was trained on. It doesn’t have intent or a sense of novelty in the way humans understand it.

Ethical and Moral Reasoning:

Human thinking is often influenced by ethical, moral, and cultural considerations. While we can program AI to follow certain ethical guidelines, or make decisions based on ethical frameworks, these are provided by humans. AI doesn’t have an inherent sense of right or wrong.

In conclusion, while AI can simulate certain aspects of human cognition, and in some areas outperform humans, it doesn’t think “like” a human in a holistic or conscious sense. The intricacies of human cognition, emotion, consciousness, and morality remain areas of exploration and differentiation when comparing machines to us.