Gemini Robotics 1.5: Making Robots Smarter

With Gemini Robotics 1.5, Google DeepMind has introduced robots that “think before they act.” Here are the details of this new generation of AI-powered robots.

Generative AI systems that create text, images, audio, or video have become part of daily life. The same kind of model can now generate robot behaviors as well as content. This idea is the foundation of Google DeepMind’s Gemini Robotics project, and two new models developed within it enable robots to “think before taking action.”

The Limited World of Traditional Robots

Traditional robots are trained at length for one specific task and, as a result, fail at anything else. Carolina Parada, head of the robotics division at Google DeepMind, notes that most robots today can perform only a single task, even after months of preparation.

Generative AI could change this picture, because these systems are flexible enough to work in new environments and on new tasks without being reprogrammed.

Two Models, One Goal: Robots that Think and Act

DeepMind’s new approach relies on two separate models:

- Gemini Robotics-ER 1.5 (Embodied Reasoning): This model processes visual and text inputs to plan the steps required to complete a task. It is the “thinking” model.

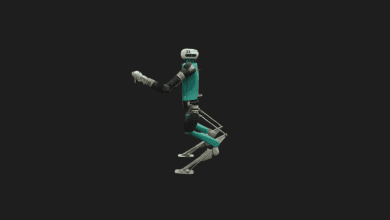

- Gemini Robotics 1.5: This model takes the instructions generated by the ER model and converts them into real robot movements. It is the “acting” model.

Together, the two models let robots plan and carry out complex, multi-step tasks.

The Robot’s New Intuition

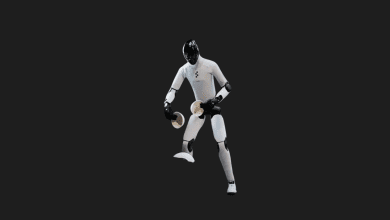

Gemini Robotics-ER 1.5 adapts the reasoning capability we see in modern chatbots to robots. For instance, when laundry needs to be sorted by color, it analyzes the image of the environment and outlines the necessary steps. These steps are then converted into actual movements by Gemini Robotics 1.5.
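The division of labor in the laundry example can be pictured with a small toy sketch. Everything in it is hypothetical: `Item`, `plan_sorting`, `execute_step`, and `run_task` are illustrative stand-ins, not part of any Gemini Robotics API, and the real models work from camera images and produce motor commands rather than strings. The sketch only shows the shape of the hand-off, in which a planner turns a scene into ordered steps and a separate executor carries out each step.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Item:
    """A piece of laundry as the planner might perceive it (hypothetical)."""
    name: str
    color: str


def plan_sorting(scene: List[Item]) -> List[str]:
    """Stand-in for the "thinking" model: turn a scene into ordered steps."""
    steps = []
    for item in scene:
        bin_name = "white bin" if item.color == "white" else "color bin"
        steps.append(f"pick up the {item.name} and place it in the {bin_name}")
    return steps


def execute_step(step: str) -> str:
    """Stand-in for the "acting" model: here it only reports the step."""
    return f"executed: {step}"


def run_task(scene: List[Item]) -> List[str]:
    """Plan first, then act on each planned step in order."""
    return [execute_step(step) for step in plan_sorting(scene)]


if __name__ == "__main__":
    laundry = [Item("shirt", "white"), Item("sock", "red")]
    for line in run_task(laundry):
        print(line)
```

Keeping the planner and the executor behind separate functions mirrors the article's point: the plan is produced in full before any movement is attempted.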

According to DeepMind researcher Kanishka Rao, the greatest advancement is that robots now adopt the “think first, then act” approach. This can be interpreted as a parallel to human intuition.

The new systems are built upon the core Gemini foundation models and have been specially trained to adapt to physical environments. This allows the robots to handle a wide range of tasks rather than a single one.

Moreover, learned skills can be transferred between different types of robots. For example, skills acquired with the arms of Aloha 2 can be applied to the humanoid robot Apollo without additional training.

Still Far from Daily Use

Although this is an exciting development, it will take time before we see “home robots that do the laundry.” Gemini Robotics 1.5 is currently open only to select trusted testers. The thinking model, Gemini Robotics-ER 1.5, is now available to developers via Google AI Studio, so researchers can use it in their own robot experiments.