Floating AI: How the Ocean Could Power the Next Generation of Data Centers

I have been watching the AI boom unfold over the last couple of years, and while everyone is obsessing over how smart these models are getting, I keep thinking about something entirely different: the electric bill.

Seriously, every time a new, massive language model drops, I can almost hear the power grids groaning. The sheer amount of electricity required to train and run generative AI is staggering, and as a tech enthusiast, I find the infrastructure problem just as fascinating as the software itself. Governments and massive corporations are throwing trillions of dollars at terrestrial data centers, and some folks are even pitching data centers in space.

But while researching the latest infrastructure trends, I stumbled upon an idea that genuinely stopped me in my tracks. Instead of looking up at the stars, what if we looked out at the ocean?

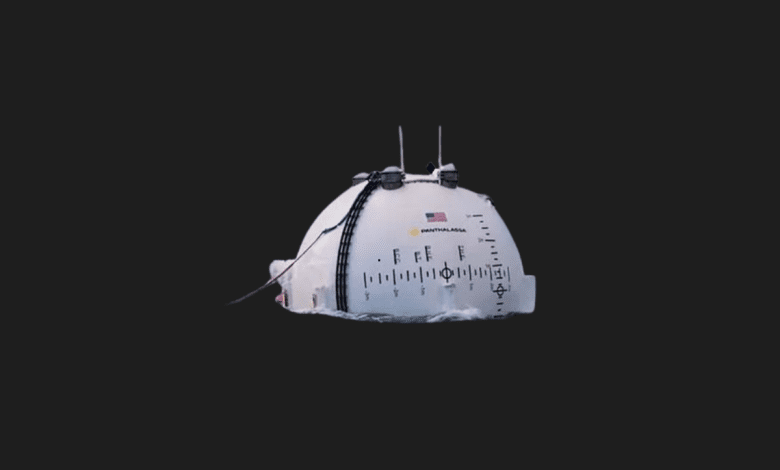

Enter Panthalassa, a US-based renewable energy and ocean tech company that just secured a massive $140 million funding round, pushing their valuation to nearly $1 billion. With Peter Thiel backing them, this is no longer just a sci-fi concept; it’s a very real, very aggressive play to move the brain of AI into the open sea. Let’s break down why I think this could be the craziest, yet most logical, shift in tech hardware we’ve seen in a decade.

Why the Land is Running Out of Power

Before we dive into the ocean, we need to understand the crisis on land. The computational hunger of AI isn’t just growing linearly; it’s exploding.

- Massive Energy Draw: Running a prompt through an advanced LLM consumes significantly more energy than a standard Google search, although published estimates vary widely. Multiply that by billions of daily queries, and you have a serious energy bottleneck.

- The Grid Limit: Our current power grids simply aren’t built to absorb the sudden, massive new loads from hyperscale data centers sprouting up in rural areas.

- The Real Estate Problem: Building these facilities requires massive plots of land, complex zoning approvals, and an uninterrupted, heavy-duty power supply.
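To see how small per-query costs turn into a grid-sized problem, here is a back-of-the-envelope sketch. The inputs are placeholders, not measurements: 3 Wh per prompt and one billion prompts a day are assumed round numbers chosen only to keep the arithmetic easy to follow, and real figures depend heavily on the model and hardware.

```python
# Back-of-the-envelope: how per-query energy adds up at scale.
# Both constants are illustrative assumptions, not measured values.

WH_PER_QUERY = 3.0               # assumed energy per LLM prompt, in watt-hours
QUERIES_PER_DAY = 1_000_000_000  # assumed daily query volume


def daily_energy_mwh(wh_per_query: float, queries_per_day: float) -> float:
    """Total energy per day in megawatt-hours (1 MWh = 1,000,000 Wh)."""
    return wh_per_query * queries_per_day / 1_000_000


def average_power_mw(energy_mwh_per_day: float) -> float:
    """Convert a daily energy total into the equivalent constant load in megawatts."""
    return energy_mwh_per_day / 24


if __name__ == "__main__":
    energy = daily_energy_mwh(WH_PER_QUERY, QUERIES_PER_DAY)
    print(f"{energy:,.0f} MWh per day")
    print(f"{average_power_mw(energy):,.0f} MW continuous")
```

Under those assumptions the script reports 3,000 MWh per day, which works out to a steady 125 MW load that the grid has to supply around the clock, before counting cooling or model training.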

I look at this and think: we are trying to force a 21st-century technological miracle through a 20th-century electrical grid. It’s a recipe for throttling innovation.

Panthalassa and the Ocean-3 Concept: Surfing the Compute

This is where Panthalassa’s vision comes in, and frankly, I find their approach incredibly elegant. They aren’t trying to build a traditional data center and just plug it into a wave-power generator near the shore. They are rethinking the entire architecture.

Their flagship platform, the Ocean-3, consists of autonomous, floating units deployed in the open ocean. Here is how they plan to pull this off:

- Direct Wave Energy: These platforms harness the energy of ocean waves to generate electricity. Open-ocean waves keep rolling day and night, so the power source is renewable and available around the clock, even though output still rises and falls with the sea state.

- Zero Transmission Loss: Here is the genius part that really caught my attention: they aren’t sending the power back to land. Transmitting electricity through long undersea cables is expensive to build and loses energy along the way. Instead, they use the generated power immediately to run AI chips located right there on the platform.

- Satellites for Output: The heavy lifting (the data processing) happens on the water. The platform then beams only the finished results, the AI tokens, back to land over a satellite link.

It’s an entirely closed loop. You put a box in the water, it powers itself, does the math, and beams you the answer.
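How much power is actually in the waves? A standard deep-water approximation gives the energy carried per meter of wave crest as P = ρ g² Hs² Te / (64π), where Hs is the significant wave height and Te the wave energy period. The sketch below evaluates it for a few sea states. The seawater density of 1025 kg/m³ and the example wave heights and periods are assumptions, and the result is the raw resource flowing past, not what any Panthalassa device actually captures.

```python
import math

RHO_SEAWATER = 1025.0  # kg/m^3, typical seawater density (assumed)
G = 9.81               # m/s^2, gravitational acceleration


def wave_power_kw_per_m(hs_m: float, te_s: float) -> float:
    """Deep-water wave energy flux per meter of wave crest, in kW/m.

    hs_m: significant wave height in meters
    te_s: wave energy period in seconds
    """
    watts_per_m = RHO_SEAWATER * G**2 * hs_m**2 * te_s / (64 * math.pi)
    return watts_per_m / 1000


if __name__ == "__main__":
    # Illustrative sea states (assumed values, not site measurements)
    for hs, te in [(1.0, 6.0), (2.0, 8.0), (4.0, 10.0)]:
        print(f"Hs={hs} m, Te={te} s -> {wave_power_kw_per_m(hs, te):.1f} kW/m")
```

The three cases come out to roughly 3, 16, and 78 kW per meter of wave front. Because power scales with the square of wave height, doubling Hs quadruples the available power, which is why output swings with the weather even though the open ocean is almost never flat.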

The Ultimate Hack: Free, Infinite Cooling

If you’ve ever stood inside a server room, you know it sounds like a jet engine and feels like a refrigerator. Heat is the ultimate enemy of computing. In traditional data centers, cooling is one of the biggest overheads; in older, less efficient facilities it can consume nearly as much energy as the processors themselves.

By moving to the open ocean, Panthalassa is tapping into the ultimate heat sink.

- Natural Liquid Cooling: The platforms are surrounded by a practically endless supply of cool seawater. By dumping heat into it, they turn one of the biggest engineering hurdles in a data center into a largely passive process that costs very little to run.

- Extended Hardware Lifespan: Better, more consistent cooling means less thermal throttling for the GPUs and a longer operational lifespan for the hardware.

- Slashed Operational Costs: Without the need for massive industrial HVAC systems, the day-to-day running costs plummet.

Panthalassa’s CEO, Garth Sheldon-Coulson, claims that with this setup, they can produce electricity at roughly $0.02 per kilowatt-hour. If they can actually hit that figure, the ocean would become one of the cheapest energy sources on the planet for AI computation.
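The cooling and price arguments combine into one simple calculation. Power usage effectiveness (PUE) is the ratio of total facility energy to the energy used by the IT equipment, so a PUE of 2.0 means overhead such as cooling equals the computing load itself. This sketch compares yearly electricity bills for the same 10 MW of compute. The land-side PUE of 1.5 and price of $0.08/kWh, and the ocean-side PUE of 1.1, are hypothetical inputs; only the $0.02/kWh figure comes from the CEO’s claim.

```python
HOURS_PER_YEAR = 8760


def annual_energy_cost_usd(it_load_mw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for a facility running its IT load around the clock.

    it_load_mw: power drawn by the servers/GPUs, in megawatts
    pue: power usage effectiveness (total facility energy / IT energy)
    price_per_kwh: electricity price in USD per kilowatt-hour
    """
    facility_kwh = it_load_mw * 1000 * pue * HOURS_PER_YEAR
    return facility_kwh * price_per_kwh


if __name__ == "__main__":
    it_load = 10.0  # MW of compute (hypothetical)
    # Hypothetical land-side inputs
    land = annual_energy_cost_usd(it_load, pue=1.5, price_per_kwh=0.08)
    # Hypothetical PUE; price taken from the $0.02/kWh claim
    ocean = annual_energy_cost_usd(it_load, pue=1.1, price_per_kwh=0.02)
    print(f"land:  ${land:,.0f} per year")
    print(f"ocean: ${ocean:,.0f} per year")
```

With these made-up inputs the land facility pays about $10.5 million a year and the ocean platform about $1.9 million, with the cheaper electricity doing most of the work and the lower PUE trimming the rest. Swap in real tariffs and measured PUE values before drawing any conclusions.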

Reality Check: The Ocean is Unforgiving

As much as I love the elegance of this solution, I have to play devil’s advocate. I wouldn’t want to be the IT technician who has to take a boat out in a Category 4 storm to hot-swap a faulty GPU rack. The open sea is a notoriously hostile environment for delicate electronics.

Here are the massive hurdles they still need to overcome:

- Saltwater Corrosion: The air in the open ocean is loaded with salt. Keeping that corrosive moisture away from highly sensitive AI chips is going to require military-grade sealing and constant maintenance.

- Biofouling: Barnacles, algae, and marine life will inevitably cover these platforms. How will this affect the wave-energy mechanics over time?

- Mechanical Stress: Continuous wave action is great for power, but terrible for structural integrity. The constant rocking and pounding will test the physical limits of server racks.

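- Satellite Latency: While beaming tokens via satellite saves on laying cables, it introduces latency, ranging from tens of milliseconds on low-Earth-orbit links to half a second or more on geostationary ones. For training massive models, this might be fine, since a training run lasts weeks and does not wait on individual round trips to shore. But for real-time, low-latency AI inference (like autonomous driving networks or high-frequency trading), that satellite delay could be a dealbreaker.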
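The latency gap between orbits comes straight from physics. This sketch computes the best-case propagation delay for a request and its response over a "bent-pipe" satellite link, ignoring slant range, ground-station routing, and processing time. The geostationary altitude of 35,786 km is fixed by orbital mechanics; the 550 km low-Earth-orbit altitude is an assumed value, typical of some current constellations.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # radio waves travel at ~c in free space

GEO_ALTITUDE_KM = 35_786  # geostationary orbit altitude
LEO_ALTITUDE_KM = 550     # assumed altitude, typical of some LEO constellations


def min_round_trip_ms(altitude_km: float) -> float:
    """Lower bound on request + response propagation delay, in milliseconds.

    Treats every hop as straight up or straight down, so each hop covers exactly
    the orbital altitude. A full round trip has four hops: the request goes
    ground -> satellite -> platform, and the response comes back the same way.
    """
    path_km = 4 * altitude_km
    return path_km / SPEED_OF_LIGHT_KM_S * 1000


if __name__ == "__main__":
    for name, altitude in [("GEO", GEO_ALTITUDE_KM), ("LEO", LEO_ALTITUDE_KM)]:
        print(f"{name}: at least {min_round_trip_ms(altitude):.1f} ms round trip")
```

The floors come out to about 477 ms for GEO and about 7 ms for LEO. Real links add slant range, routing, and processing on top, which is why measured LEO latency lands in the tens of milliseconds while GEO links often sit around 600 ms.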

When Do We See Floating Servers?

Despite my reservations about the harsh environment, the timeline is moving fast. Panthalassa isn’t starting from scratch; they’ve been iterating on this for nearly a decade. Their earlier prototypes (Ocean-1, Ocean-2, and Wavehopper) successfully proved the core concept between 2021 and 2024.

Now, the plan is to deploy the first commercial pilot platforms of the Ocean-3 series in the North Pacific sometime in 2026. If everything goes according to plan, they aim for full commercial availability by 2027.

Having a heavyweight like Peter Thiel in their corner isn’t just about the money; it brings a level of credibility and access to government and defense contracts (something Thiel is very familiar with via Palantir) that could push this over the finish line.

Final Thoughts

I honestly believe we are looking at a critical pivot point in infrastructure. We can’t keep paving over acres of land and burning coal to generate funny AI images or write code. We have to look for extreme solutions, and putting server racks in the middle of the Pacific Ocean is exactly the kind of radical thinking the industry needs right now.

I’ll be watching Panthalassa very closely over the next couple of years. If they can solve the corrosion and latency issues, they might just redefine the architecture of the internet.

What do you guys think? If you were running an AI startup, would you trust your multimillion-dollar training runs to a floating server farm in the middle of the ocean, or does the idea of a storm wiping out your data terrify you too much? Let me know!