The Era of Synthetic Humans: Are We Living in a Blade Runner Reality?

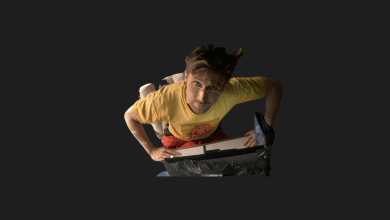

Honestly, I have to confess something. For years, I covered robotics news with a sense of childlike wonder. “Look at that robot do a backflip!” or “Wow, it can hold an egg without breaking it!” It was cool, it was mechanical, and—most importantly—it was clearly not human.

But recently, while watching the latest demonstration of a humanoid robot powered by a Generative AI brain, that feeling of wonder shifted into something else. It was a slight chill down my spine.

We are no longer just building helpful machines to carry boxes in a warehouse. We are sprinting toward the creation of synthetic clones. When I look at the current trajectory, I can’t help but ask: Are we physically building the cast of a Blade Runner movie, not for a sci-fi blockbuster, but for our actual streets?

In this article, I want to dive deep into the “Visual Turing Test,” why the “Uncanny Valley” is disappearing, and what it really means for us when we can’t tell who is “real” anymore.

From Clunky Metal to “Synthetic Souls”

Remember when “robot” mostly meant a giant arm in a car factory? That picture is starting to feel like ancient history. What we are seeing now with companies like Boston Dynamics, Tesla (Optimus), and Figure is a fundamental shift in design philosophy.

The goal isn’t just utility anymore; it’s mimicry.

When I look at the fluid movements of the latest humanoids, two things stand out to me that scream “the future is here”:

- Micro-Movements: It’s not the walking that impresses me; it’s the fidgeting. A robot that stands perfectly still looks like a machine. A robot that shifts its weight, blinks randomly, or tilts its head while “thinking” looks frighteningly alive.

- The Skin Texture: We are moving past white plastic shells. Researchers are developing synthetic skin that mimics the warmth, elasticity, and even the imperfections of human skin.

Why does this matter? Because once you combine realistic skin with fluid, imperfect movement, you stop seeing a tool. You start seeing a being.

The Visual Turing Test: A New Threshold

We all know the classic Turing Test: Can a machine fool a human into thinking it’s human through text? Thanks to LLMs (Large Language Models), we have arguably come close to passing it, at least in casual conversation.

But now, we are facing the Visual Turing Test.

Imagine this scenario: It’s the year 2035. You are sitting in a coffee shop. The person at the table next to you is reading a book. They sip their coffee, they look out the window, they sigh.

Are they biological? Or are they synthetic?

If we reach a point where you need to physically touch them—or worse, see them bleed—to know the answer, society changes overnight. I believe we are much closer to this reality than most people are willing to admit. The hardware is catching up to the software at a terrifying pace.

The “Uncanny Valley” Is Being Filled

For decades, roboticists feared the “Uncanny Valley”—that creepy feeling we get when something looks almost human but is slightly off (like a zombie or a bad wax figure).

However, looking at the latest iterations of robots like Ameca, I think we are climbing out of that valley. When a robot can furrow its brow in confusion or smile with what looks like genuine warmth (even if it is programmed), our brains are hacked. We are hardwired to empathize with things that look like us.

The Brain Behind the Face

Of course, a realistic body is just a mannequin without a brain. This is where the integration of advanced AI models changes everything.

I’ve played around with plenty of chatbots, but giving those bots a physical body creates a completely different dynamic.

- Spatial Awareness: These robots aren’t just reciting Wikipedia; they are learning to understand the physical world. They know that a glass is fragile and a rock is not.

- Contextual Memory: Imagine a robot butler that remembers you had a bad day yesterday and asks you about it today with a sympathetic tone.

It sounds helpful, right? But it also raises a massive philosophical flag for me. If a machine looks like a human, acts like a human, and “remembers” like a human… at what point do we stop treating it as an appliance?

Are We Ready for the Social Impact?

This isn’t just about cool tech; it’s about how we live. I often think about the economic and social shockwaves this will cause.

- The Trust Deficit: In a world of deepfakes, we already struggle to trust what we see on screens. Soon, we might struggle to trust what we see on the street.

- The Loneliness Epidemic: It’s a dark thought, but if synthetic humans are perfect companions—never arguing, always listening, perfectly beautiful—will humans stop dating each other? Why deal with the messiness of human relationships when you can have a custom-tailored synthetic partner?

- Labor Shift: It’s not just blue-collar jobs. Service roles, receptionists, care workers for the elderly… if a robot can do it with a smile and never get tired, the job market is going to look radically different.

Conclusion: Evolution or Replacement?

I don’t write this to scare you. I write this because I am fascinated and, frankly, a little overwhelmed by the speed of it all.

We are not just coding software; we are coding our own reflection. The line between “born” and “made” is about to get very blurry. Whether this leads to a utopia where robots do all the work, or a crisis of identity for the human race, depends on the choices we make now.

But one thing is certain: The sci-fi future we watched in movies isn’t 100 years away. It’s knocking on the door.

I really want to hear your perspective on this: If you couldn’t tell the difference between a human and a robot on the street, would it bother you? Or do you think it doesn’t matter as long as they are nice?

Let’s discuss in the comments below.