Google’s TurboQuant: The Algorithm Changing AI Memory Forever

If you have ever tried running a large language model locally on your own computer, you already know the immediate, painful reality: these things will aggressively devour every single gigabyte of RAM you own. I’ve spent countless hours trying to optimize local AI setups, and the memory bottleneck is always the wall you hit.

But when I was reading through Google’s latest research blog this morning, I actually sat up in my chair. Google has just quietly announced a new compression technology called TurboQuant, and it fundamentally changes the math on how artificial intelligence consumes hardware.

We are talking about 6 times less memory usage and 8 times faster processing speeds—all without making the AI “dumber.” I hate throwing around the word “revolution,” but this is exactly the kind of breakthrough we need to take AI out of massive, expensive server farms and put it directly into our pockets. Let’s break down exactly what Google just pulled off.

The Bottleneck We’ve All Been Ignoring: The KV Cache

To understand why TurboQuant is such a big deal, we have to talk about how AI actually “remembers” your conversation.

Large Language Models (LLMs) don’t read words like we do; they convert concepts into high-dimensional vectors (massive strings of numbers). To avoid recalculating the meaning of every word every single time you ask a follow-up question, the AI uses something called a Key-Value (KV) cache. Think of the KV cache as the AI’s digital cheat sheet.

Here is the problem:

- As your conversation gets longer, that cheat sheet gets massive.

- These vectors contain hundreds or thousands of parameters.

- Storing them requires a ridiculous amount of high-speed memory.

Historically, developers have tried to fix this using a method called quantization—which basically means squeezing the data into a lower resolution. It saves space, but the nasty side effect is that the AI starts hallucinating or giving lower-quality answers. It was always a forced compromise. Until now.

Enter TurboQuant: How Google Did the Impossible

According to their initial tests, Google’s TurboQuant completely bypasses this compromise. It shrinks the model’s memory footprint dramatically without degrading the quality of its output.

How did they do it? They split the compression process into two incredibly clever steps.

Step 1: PolarQuant and the Geometry of Language

The first phase is a system they call PolarQuant, and the logic behind it is brilliant.

Normally, AI vectors are plotted using standard Cartesian (XYZ) coordinates. But PolarQuant takes those heavy, complex coordinates and translates them into polar coordinates. Instead of tracking a massive grid, every vector is suddenly represented by just two simple pieces of information:

- Radius: The strength or magnitude of the data.

- Angle: The semantic direction (the actual meaning) of the data.

Google used a perfect analogy for this: Traditional XYZ mapping is like telling someone, “Walk 3 blocks East, then 4 blocks North.” PolarQuant changes the instruction to, “Turn 37 degrees and walk 5 blocks.” It is a shorter, cleaner, and vastly more efficient way to store the exact same destination.

Step 2: The “QJL” Safety Net

Of course, aggressively compressing data like this usually creates slight deviations or “glitches” in the AI’s understanding. To fix this, Google implemented a second layer called the Quantized Johnson-Lindenstrauss (QJL) method.

Don’t let the complex name intimidate you. Essentially, QJL acts as a microscopic error-correction layer. It uses just a single bit (+1 or -1) to represent and tweak the vectors, ensuring that the critical semantic relationships between words aren’t lost in the compression. It makes sure the AI’s “attention” mechanism stays dead-on accurate.

Real-World Testing: No Retraining Required

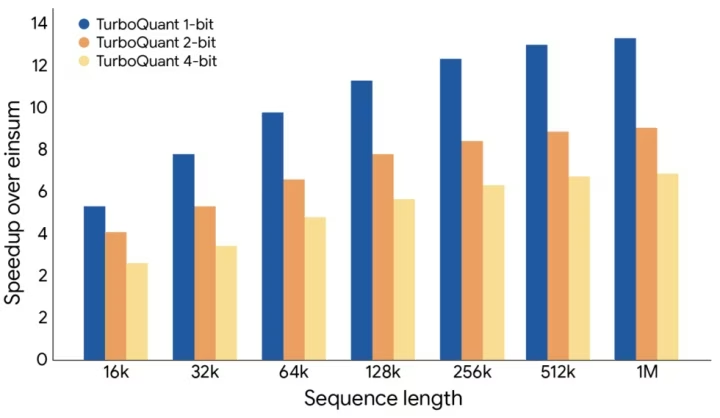

Concepts are great, but the actual benchmark numbers are what really blew my mind. Google didn’t just test this in a vacuum; they ran TurboQuant on popular open-weight models like Gemma and Mistral.

Here are the hard facts from their tests:

- 6x Memory Reduction: The KV cache memory requirement dropped by a factor of six.

- 3-Bit Compression: It can compress the cache down to just 3 bits per parameter.

- Zero Retraining: This is huge for developers. You can apply TurboQuant to existing models without having to spend millions of dollars retraining them from scratch.

- Blazing Speed: When tested on an Nvidia H100 GPU, the 4-bit TurboQuant performed attention calculations 8 times faster than traditional 32-bit uncompressed keys.

Why I Think This is the Key to Mobile AI

While it’s easy to look at this and think about how much money cloud providers will save on server costs, I look at TurboQuant and see the future of the smartphone.

Right now, to get ChatGPT-level intelligence, your phone has to send your data to a cloud server, wait for the massive computers to do the thinking, and ping the answer back to you. It requires an internet connection, it drains battery, and it poses massive privacy concerns.

With algorithms like TurboQuant, the hardware limitations of mobile devices suddenly don’t look so intimidating. If we can compress the memory footprint by 6x and speed up the processing by 8x, running a hyper-intelligent, fully private AI natively on your smartphone isn’t a pipe dream anymore. It is imminent.

I honestly believe we are moving toward a world where the most powerful AI isn’t sitting in a data center, but resting right in your pocket.

So, I’m curious to hear your take on this. If algorithms like TurboQuant make it possible to run incredibly smart AI entirely offline on your smartphone, would you finally ditch the cloud-based apps for the sake of total privacy? Let me know your thoughts down below!